Blocking Bots Considered Harmful

To Serve and Protect

One of the key responsibilities of infrastructure teams is protecting services from unwanted traffic. We implement various mechanisms to counter synthetic HTTP requests: rate-limiting, geo-blocking, Web Application Firewalls, challenges, CAPTCHAs. We routinely analyze logs, respond to traffic spikes, and prevent scraping. We ensure our services are primarily accessed by humans and a limited group of trusted bots.

The principle has always been simple: the web should be built for humans.

Is this principle still relevant today?

More and more content is consumed by language models. They thrive on the knowledge they can access. By blocking these bots, we effectively exclude our services from being indexed and represented in these increasingly important information sources.

Don’t make yourself invisible.

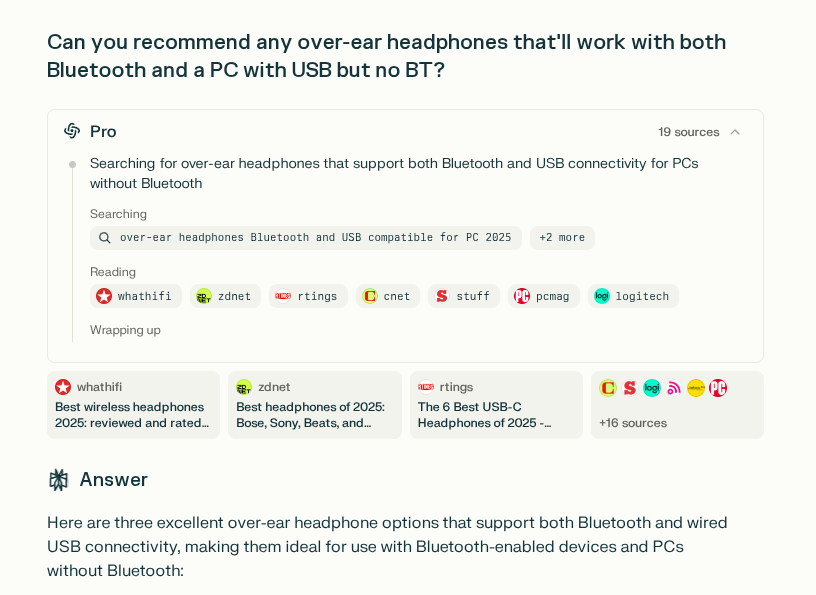

An excellent example illustrating this issue comes from perplexity.ai:

Perplexity scours the web to provide the response. Every word of it came from some input data. The same applies to other models, even if they don’t emphasize their sources. None of them can draw on sources that actively block them.

In the context of the search, if a specific company or product is mentioned, it’s only because it was successfully indexed. And what’s missing? Companies that decided to put their pages behind bot protection, becoming invisible to the automated web.

Therefore, accessibility of your website to bots can become a competitive advantage. Launching a new site with bot protection immediately weakens its market position.

Are you ready to be indexed?

Not every webpage can safely allow unrestricted bot traffic. Most would likely crumble under the load. The technology exists to handle this challenge, but it may be the last moment to adapt and prepare for the increase in automated traffic.

Static websites, separate APIs and bot-friendly interfaces are becoming essential. This may seem similar to Search Engine Optimization (SEO), but we’re optimizing for something different here. Let’s call it AIO.

Artificial Intelligence Optimization (AIO)

To prepare your site for widespread bot indexing, consider the following strategies:

Ensure accessibility. No more CAPTCHAs, challenges, or front ends that require large amounts of JavaScript. Follow accessibility guidelines such as WCAG and the EAA, which benefit both humans and bots.

Separate dynamic from static content. Make informational parts static. Serve them from services designed to handle unlimited traffic.

Revise your robots.txt approach. Don’t maintain a whitelist of bots. The landscape changes too rapidly. Maintain a blacklist if you need one.

Maintain essential security. Keep rate-limiting and a WAF on dynamic elements, and keep the ability to blacklist (not whitelist!) problematic sources.

Validate your AI presence. Regularly check whether AI models can access your content. Test whether your site appears in AI-generated responses for relevant queries.

Monitor access logs. Verify that bot requests are handled properly and not being blocked unintentionally.
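Two of the strategies above, the blacklist-style robots.txt and the access log check, are easy to verify with a short script. The following is only a minimal sketch under stated assumptions: the robots.txt content, the bot names (`BadBot`, `ExampleBot`, `SomeNewBot`) and the helper functions are invented for illustration, the log lines are assumed to be in the common "combined" format, and a bot is recognized by the crude heuristic that its User-Agent contains "bot". Adjust all of these to your own setup.

```python
import re
from collections import Counter
from urllib.robotparser import RobotFileParser

# Hypothetical blacklist-style robots.txt: everyone is allowed by default,
# only one named crawler ("BadBot", an invented name) is excluded.
ROBOTS_TXT = """\
User-agent: BadBot
Disallow: /

User-agent: *
Allow: /
"""

# Assumes the "combined" access log format:
# ip - - [time] "METHOD path PROTO" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'"\S+ \S+ \S+" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Status codes treated here as "the bot was turned away" (an assumption).
BLOCKING_STATUSES = {"403", "429"}


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def blocked_bot_requests(log_lines) -> Counter:
    """Count blocked requests per User-Agent that looks like a bot."""
    counts = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue  # skip lines that are not in the assumed format
        agent = match.group("agent")
        # Crude heuristic: any User-Agent containing "bot" counts as a bot.
        if "bot" in agent.lower() and match.group("status") in BLOCKING_STATUSES:
            counts[agent] += 1
    return counts


if __name__ == "__main__":
    # A crawler we have never heard of should still be let in.
    print(is_allowed(ROBOTS_TXT, "SomeNewBot/1.0", "https://example.com/docs"))
    # The explicitly blacklisted one should not.
    print(is_allowed(ROBOTS_TXT, "BadBot/2.0", "https://example.com/docs"))

    sample_log = [
        '203.0.113.7 - - [01/Jan/2025:10:00:00 +0000] '
        '"GET /docs HTTP/1.1" 403 153 "-" "ExampleBot/1.0"',
        '198.51.100.4 - - [01/Jan/2025:10:00:02 +0000] '
        '"GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    ]
    for agent, count in blocked_bot_requests(sample_log).items():
        print(f"{agent}: {count} blocked request(s)")
```

If a bot you want to be indexed by shows up in the second report, something in front of your site (WAF rule, rate limit, challenge page) is probably blocking it unintentionally.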

Embrace the future

Stop worrying about bot traffic and start embracing automated visitors. They are proxies for your customers and represent how many people will discover your content, your products and your services in the AI-driven future.

By making your web presence AI-friendly today, you’re securing your visibility in tomorrow’s information landscape.